This article appeared in its original form on the Queen’s Gazette website.

Queen’s University researchers receive funding to develop a next-generation medical simulation platform.

As a teaching strategy, simulation-based training has been around a long time. From aviation and space flight to the military, from law to policing, simulation has been used to create learning environments that reflect the real world, without putting the learner or other participants at risk.

A multidisciplinary group of faculty and post-doctoral researchers from the faculties of Engineering and Applied Science, Health Sciences, Arts and Science, and Education, in partnership with Queen’s University’s Ingenuity Labs Research Institute, has received an $850,000 grant from the Department of National Defence’s IDEaS fund to advance the development of intelligently adaptive augmented reality (overlaying virtual images, audio, or entire scenes within a real-world context) and virtual reality (a completely constructed virtual space) simulations.

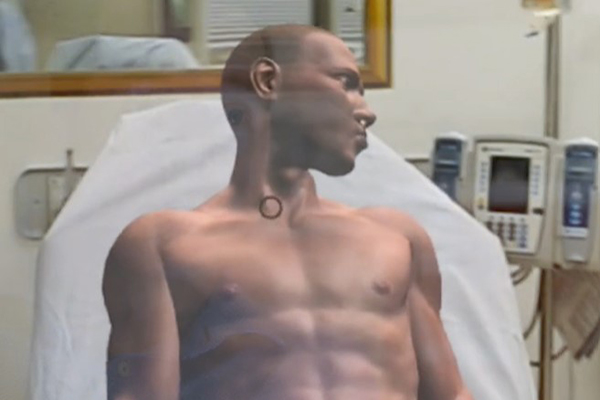

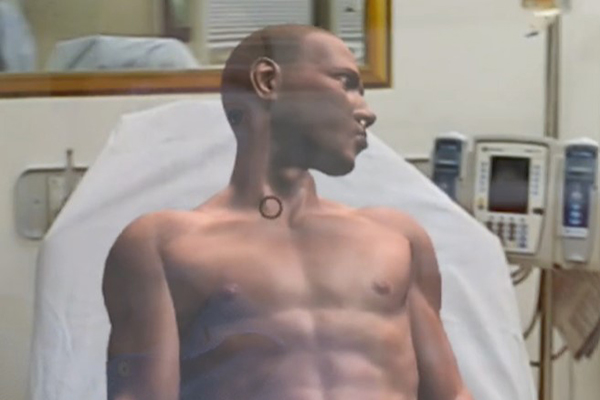

The group’s goal is to build a next-generation medical simulation platform that generates intelligent, compelling, lifelike, and adaptive simulation environments that respond to each individual learner.

“Medical simulation is becoming a critical part of our teaching toolbox,” explains Daniel Howes, Professor, Critical Care Medicine and director of the Clinical Simulation Centre (CSC) at Queen’s University. “Still, we’re behind other disciplines in using simulation to help close the gap between clinical knowledge and clinical practice. In aviation, for example, 80 per cent of their training is simulation based, but in medicine, it’s currently about 20 per cent.”

Research like this is why the CSC, Canada’s first virtual reality medical training facility, was created. But the ability to make simulation more lifelike and accessible is only part of the objective of the grant.

“Research shows that to be effective, the complexity of the simulation must match the learner’s level of expertise and cognitive capacity or load, and right now, most simulations are not designed that way,” says Adam Szulewski, Associate Professor, Emergency Medicine. “We know that, to maximize learning, we have to hit the sweet spot for a given learner in terms of simulation complexity. The holy grail is a system that can adapt to the learner on the fly.”

Traditionally, experts observed a learner’s performance in a simulation and judged it against standard benchmarks. There was no way to look inside the learner’s mind to understand how they were reacting to the challenges in the simulation.

All of that has changed with the availability of real-time, wearable sensors such as electrocardiogram (ECG) monitors, electroencephalogram (EEG) monitors, and eye-trackers, in combination with artificial intelligence. The data these sensors generate can be fed to machine learning models, including deep neural networks, to accurately identify the learner’s expertise, cognitive load, emotional state, and level of engagement with the simulation.

“We have the chance to completely redefine the simulation learning paradigm,” says Paul Hungler, Assistant Professor, Chemical Engineering. “From a static relationship based on simple behaviours to a dynamic partnership, in which the simulation platform can identify and immediately respond to a learner by changing the complexity of the simulation. That’s revolutionary.”

The proposed platform demands expertise in engineering and software to realize the augmented/virtual reality and AI/deep learning components of the project, while the medical simulation design requires medical, cognitive, and educational expertise.

“As a research institute with a mandate specifically seeking to explore and integrate artificial intelligence, robotics and human-machine interaction, the Ingenuity Lab is perfectly positioned to support this type of deeply interdisciplinary project,” states Ramzi Asfour, Associate Director.

The research team has formed SIMIAN (Simulation and Intelligent Adaptivity Network) to support the advancement of this type of dynamic simulation-based learning technology.

“With augmented and virtual reality, we can significantly reduce the cost of simulation while simultaneously increasing its realism and accessibility,” says Dr. Howes. “We’re really excited about the potential of this technology.”